TEREVUE

Welcome to the future of geospatial data management – where big problems meet even bigger solutions.

Teren’s cloud-based, high-performance computing platform isn’t just a solution; it’s a game-changer. We fuse remotely sensed data, real-time climate intel, and environmental insights to breathe life into 3D environmental twins while setting new benchmarks for speed.

Large Geographies Are Our Specialty

We set a new standard for 3D data processing at speed and scale, delivering greater accuracy and high point density across geographically large regions.

- Create an environmental digital twin of long linear assets or new project sites

- Infinitely scalable processing to meet the needs of any workload and timeline

- Fully classified, analysis-ready datasets generated within hours for 10,000+ sq mi

- Trusted, proven performance in civil, infrastructure, and government markets

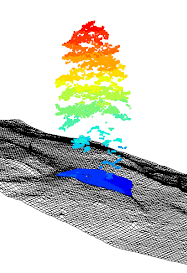

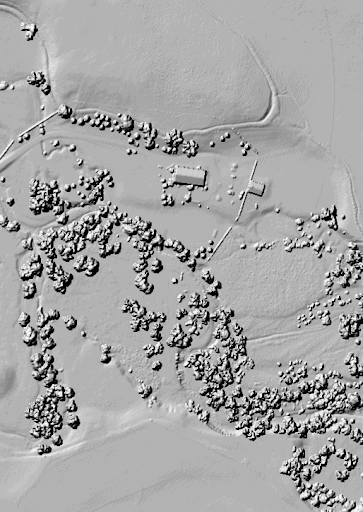

High-Density Point Cloud Data

High-Resolution 4-Band Imagery

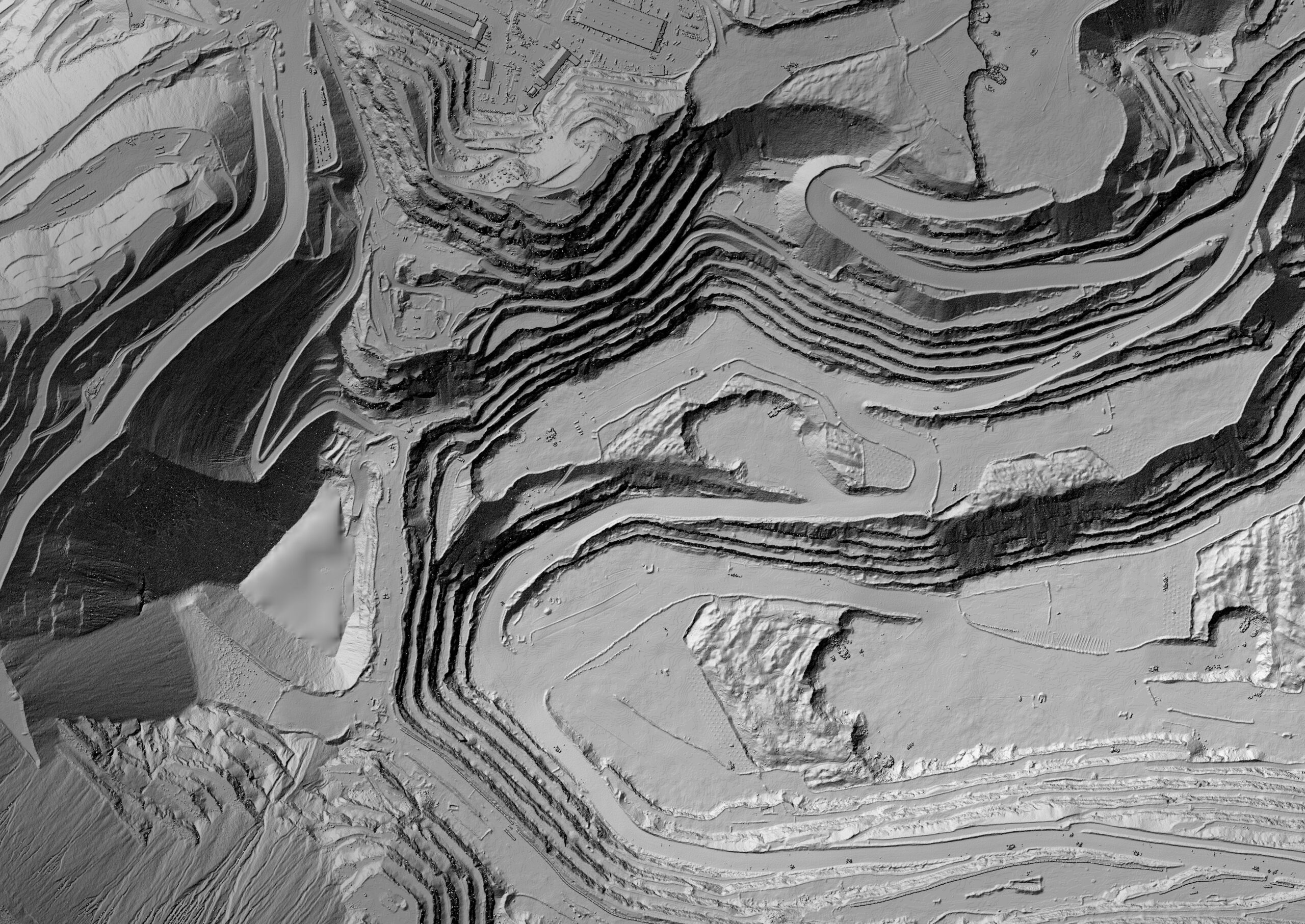

High-Fidelity Digital Surface Model

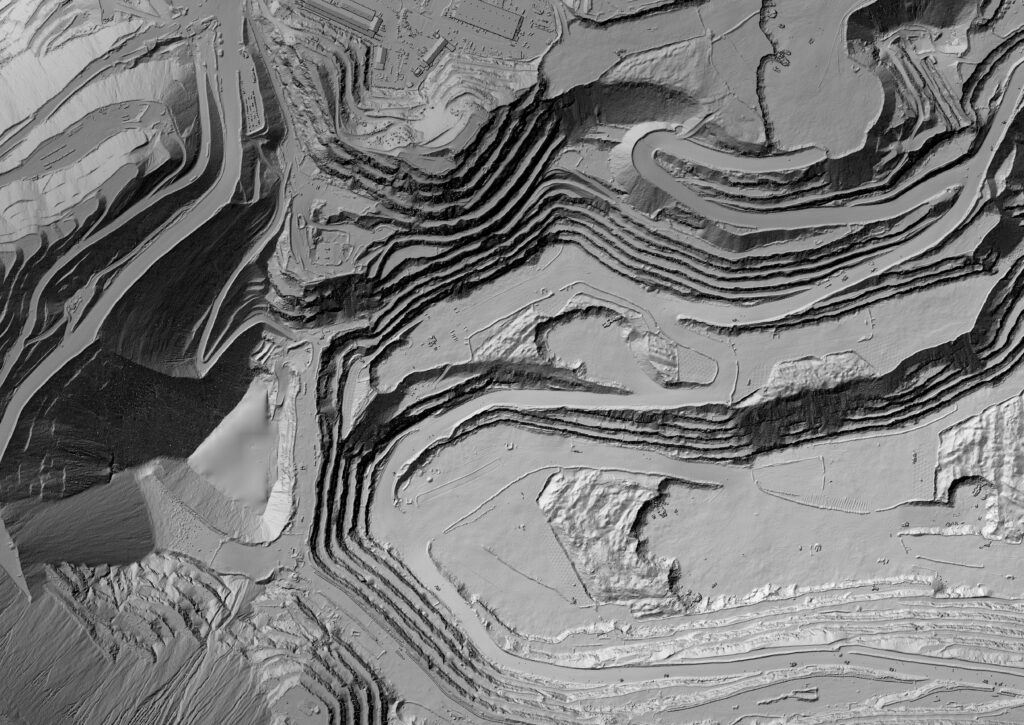

Temporal Digital Terrain Model

Why Teren?

With Teren, what used to take ages now happens in the blink of an eye.

Rapid Data Processing

Teren has created a streamlined, consistent, and scalable approach to processing remotely sensed data faster than anyone.

Decision-Ready Analytics

Teren applies domain expertise and machine learning to deliver objective, repeatable, and accurate analytics.

Simple & Secure Delivery

Teren’s data is delivered via secure streaming services, integration with existing technology, or direct download.

Data Retention & Accessibility

Teren provides access to a historical content library for evaluating how the environment changes over time.

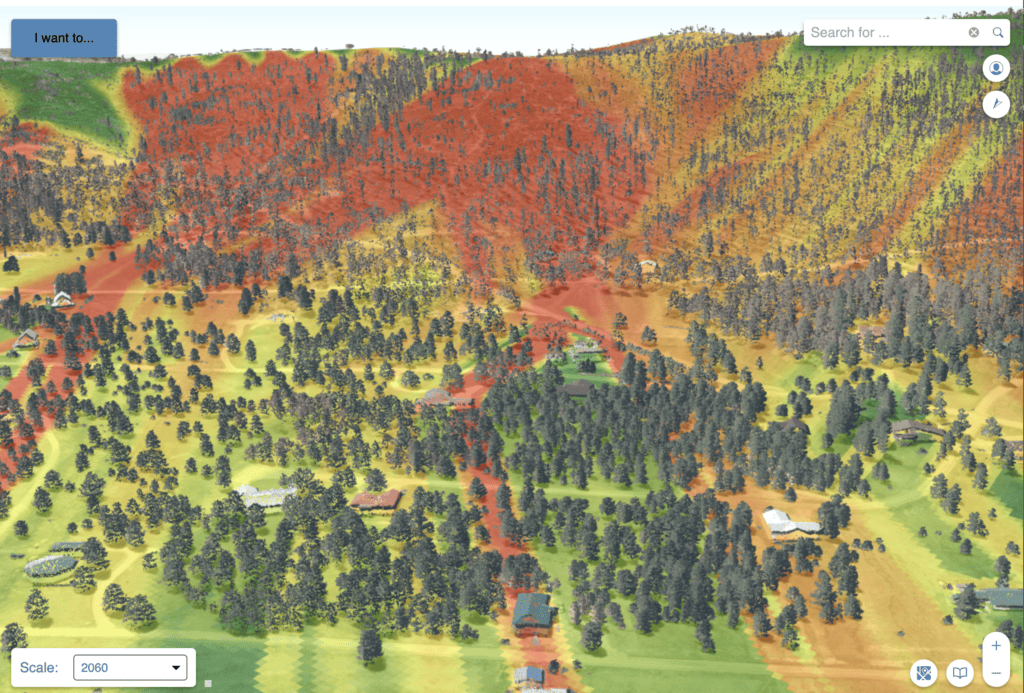

Case Study: Teren Processes & Analyzes 340,000+ acres of Burn Scar for Hermit’s Peak in Record Time

Teren’s approach to processing, calibrating, and classifying LiDAR data enabled the USDA to assess 340,000+ acres of burn area from the Hermit’s Peak fire and prescribe treatments in less than four weeks.